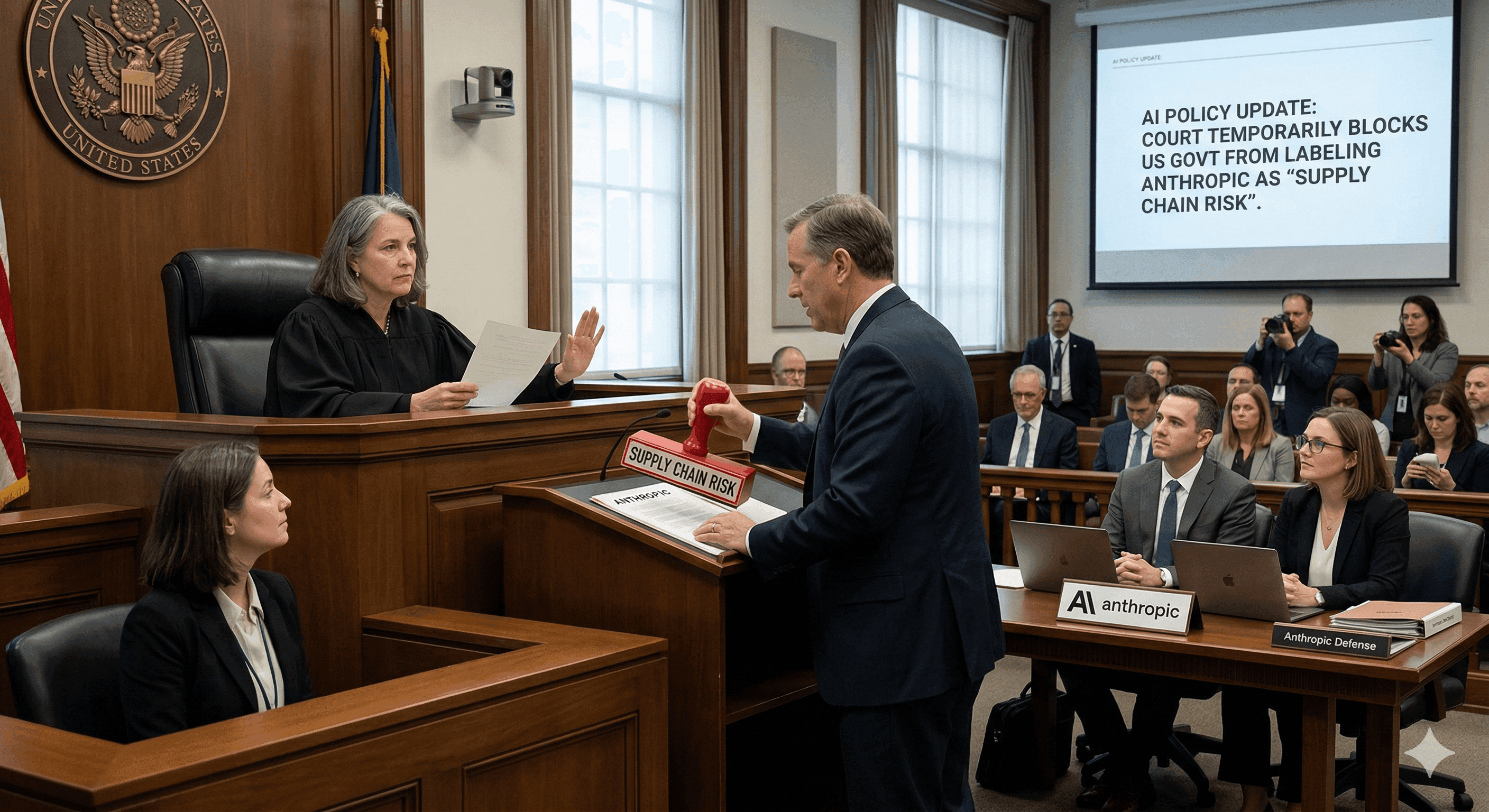

What Changed

The court has granted Anthropic’s request for a preliminary injunction, preventing the government from banning its products for federal use and from formally labeling it as a “supply chain risk,” at least for now.

If you’ll recall, things turned sour between the company and the Trump administration when Anthropic refused to change the terms of its contract to allow the government to use its technology for mass surveillance and the development of autonomous weapons. In response to Anthropic’s refusal, the president ordered federal agencies to stop using Claude and the company’s other services. The Defense Department also officially labeled it a supply chain risk, a designation typically reserved for entities that threaten national security, usually ones based in US adversaries like China. In addition, the department’s secretary, Pete Hegseth, warned companies that if they want to work with the government, they must sever ties with Anthropic.

The AI company challenged the designation in court, calling it unlawful and a violation of its rights to free speech and due process. It also asked the court to pause the ban while the lawsuit is ongoing. In a court filing, the Defense Department said giving Anthropic continued access to its warfighting infrastructure would “introduce unacceptable risk” to its supply chains.

But Judge Rita F. Lin of the District Court for the Northern District of California said the measures the government took “appear designed to punish Anthropic.” Lin wrote in her decision that Anthropic appears to have been punished for criticizing the government in the press. “Punishing Anthropic for bringing public scrutiny to the government’s contracting position is classic illegal First Amendment retaliation,” she continued. The judge also said that the supply chain risk designation is contrary to law as well as arbitrary and capricious.
She added that the government argued that Anthropic showed its subversive tendencies by “questioning” the use of its technology. “Nothing in the governing statute supports the Orwellian notion that an American company may be branded a potential adversary and saboteur of the US for expressing disagreement with the government,” she wrote.

Anthropic told The New York Times that it’s “grateful to the court for moving swiftly” and that it’s now focused on “working productively with the government to ensure all Americans benefit from safe, reliable AI.” The company’s lawsuit is still ongoing, and the court has yet to issue its final decision. Judge Lin said, however, that Anthropic “has shown a likelihood of success on its First Amendment claim.”

This article originally appeared on Engadget at https://www.engadget.com/ai/court-temporarily-blocks-us-government-from-labeling-anthropic-as-a-supply-chain-risk-083857528.html?src=rss

Why It Matters Right Now

This update explains the practical impact for people using AI in real work, learning, and daily life.

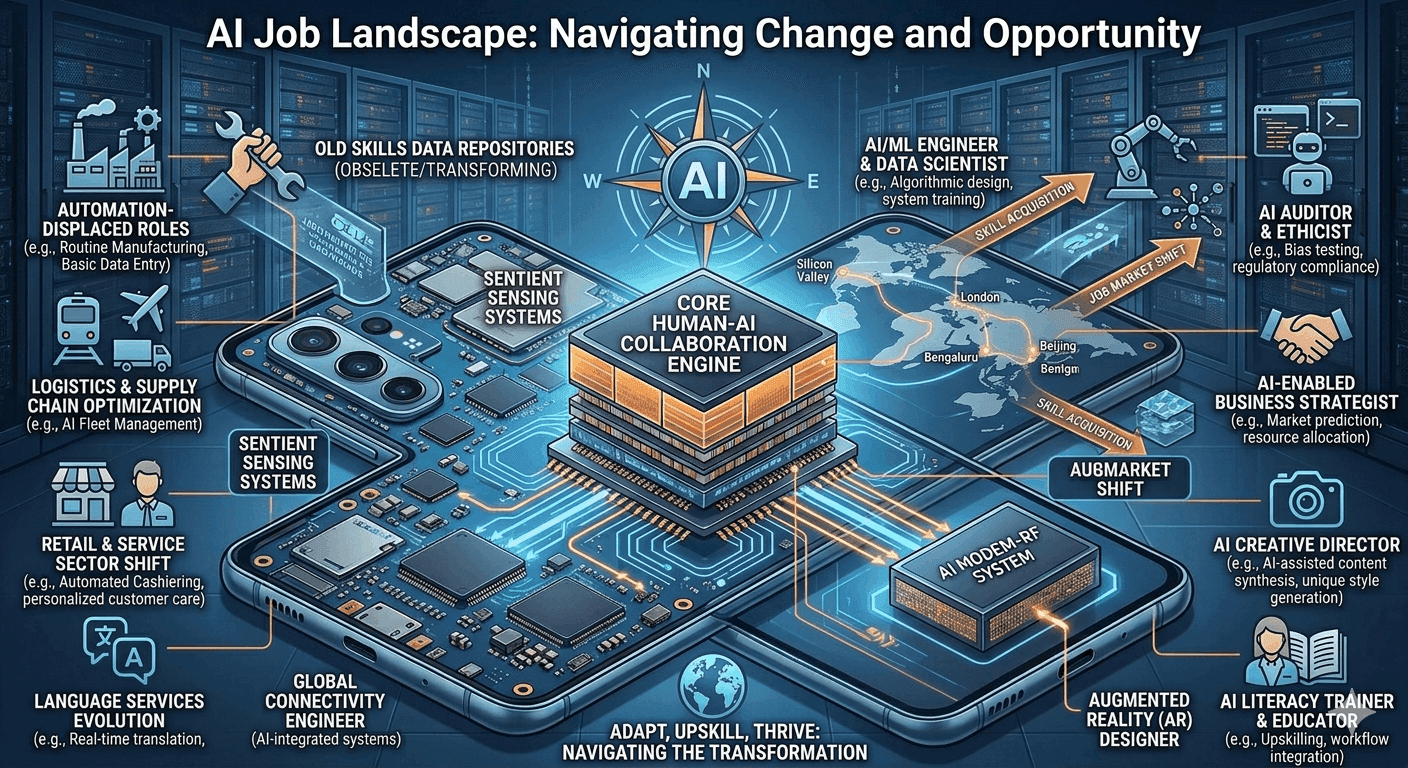

Who Should Use This

- Students and job seekers

- Freelancers and creators

- Office teams and small businesses

- Non-technical users exploring automation

Practical Use Cases

Student Use Cases

- Research summarization and study planning

- Mock interview practice

Business Productivity Use Cases

- Faster content drafting and reporting

- Repetitive task automation for teams

Daily Life Use Cases

- Weekly planning and personal task organization

- Simple assistants for writing and decision support

Action Checklist

- Pick one use case to test this week

- Track time saved versus your current workflow

- Keep only tools that improve real outcomes

Final Take

Focus on practical value, reliability, and cost before adopting any AI tool at scale.